We have fallen for the folly of maximum GPU performance.

It is the age of 450 Watt GPUs. Why is that?

There is absolutely no need to have 450 Watt GPUs.

The sole reason they draw that much is to gain a few additional FPS in the benchmarks.

For real-world use, those 450 watts are useless, as I concluded after running an experiment.

Traditionally, I have always used NVIDIA GPUs in my Linux boxes, for stability and performance.

But today, I am running an (elderly) AMD RX580 in my main rig.

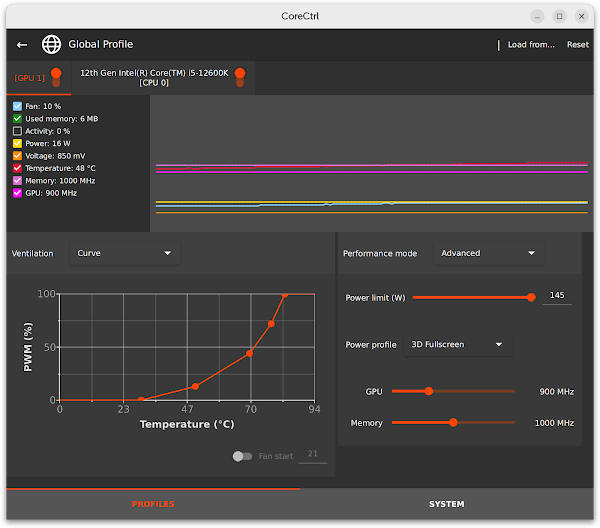

NVIDIA on Linux does not give you much control over clock speeds and voltages, but CoreCtrl lets me adjust the clocks for the RX580.

I already knew that for the last few percent of performance, you need a heap more wattage.

This is because power draw increases linearly with clock speed, but quadratically with voltage.
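As a rough first-order sketch (the textbook approximation for dynamic switching power, not something I measured), that relationship can be written as below. Here $C$ is an assumed effective switched capacitance, $V$ the core voltage and $f$ the clock frequency. It ignores static leakage, VRAM and fan power.

```latex
P_{\mathrm{dyn}} \approx C \, V^{2} f
\qquad\Longrightarrow\qquad
\frac{P_2}{P_1} \approx \frac{f_2}{f_1} \left( \frac{V_2}{V_1} \right)^{2}
```

Under this model, raising the voltage by 10% at a fixed clock costs roughly 21% more power, since $1.1^2 = 1.21$.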

Increasing Voltage comes at a huge cost. Let's quantify that cost.

I benchmarked my Photon Mapping engine on two settings:

900 MHz GPU, 1000 MHz VRAM versus 1360 MHz GPU, 1750 MHz VRAM.

I then checked the FPS and the (reported!) wattage. For a more complete test, measuring power consumption at the wall socket would have been better. Maybe another time.

So, with the higher clocks, we expect better performance of course. Did we get better performance?

| Clocks (GPU/VRAM, MHz) | FPS | Voltage (V) | Power (W, reported) |
| --- | --- | --- | --- |
| 900/1000 | 38 | 0.850 | 47 |
| 1360/1750 | 46 | 1.150 | 115 |

The result was even more extreme than I expected:

For 145% more power consumption, I got to enjoy 21% more frames/s.
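For anyone who wants to check the arithmetic, here is a minimal Python sketch using only the four measurements from the table above. The comparison against the "linear in clock, quadratic in voltage" rule is a rough sanity check under my own assumptions: it looks at the core clock only, ignores VRAM and static power, and relies on the reported (not wall-socket) wattage.

```python
# Measurements copied from the table: core clock (MHz), FPS, volts, reported watts.
low = {"mhz": 900, "fps": 38, "volt": 0.850, "watt": 47}
high = {"mhz": 1360, "fps": 46, "volt": 1.150, "watt": 115}


def percent_more(new, old):
    """Relative increase of `new` over `old`, in percent."""
    return (new / old - 1.0) * 100.0


power_gain = percent_more(high["watt"], low["watt"])
fps_gain = percent_more(high["fps"], low["fps"])

# First-order model (an assumption, not a measurement): power ~ clock * voltage^2.
predicted_ratio = (high["mhz"] / low["mhz"]) * (high["volt"] / low["volt"]) ** 2
measured_ratio = high["watt"] / low["watt"]

print(f"power: +{power_gain:.0f}%  fps: +{fps_gain:.0f}%")
print(f"power ratio, model: {predicted_ratio:.2f}x  measured: {measured_ratio:.2f}x")
```

The simple model lands in the same ballpark as the reported numbers (about 2.8x predicted versus about 2.4x measured), which is as close as I would expect from such a crude approximation.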

Not only that. The high-clock setting came with deafening fan noise, whereas I did not hear a thing at low voltage.

Which now makes me ask the question:

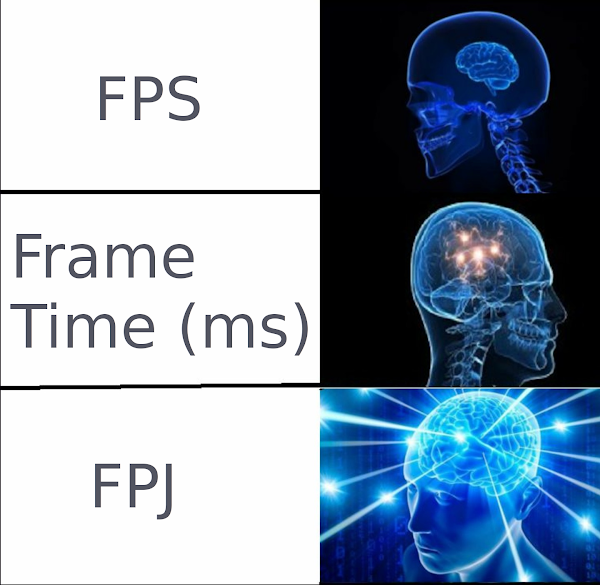

Shouldn't those benchmarking YouTube channels do their tests differently?

Why are we not measuring --deep breath-- frames per second per (joule per second) instead? (A watt is defined as one joule per second.)

We can of course simplify those units as frames per joule, as the time units cancel each other out.
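Applied to my two measurements, the metric could be computed like this. It is a small sketch: `frames_per_joule` is just a helper name I made up, and the wattage is still the reported figure, not a wall-socket measurement.

```python
def frames_per_joule(fps, watts):
    """(frames / second) / (joules / second) = frames / joule."""
    return fps / watts


low_fpj = frames_per_joule(38, 47)    # 900/1000 MHz setting
high_fpj = frames_per_joule(46, 115)  # 1360/1750 MHz setting

print(f"low clocks:  {low_fpj:.2f} frames/J")   # 0.81 frames/J
print(f"high clocks: {high_fpj:.2f} frames/J")  # 0.40 frames/J
print(f"low clocks are {low_fpj / high_fpj:.1f}x more efficient")  # 2.0x
```

By this measure the low-clock setting delivers about twice as many frames for every joule spent.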

Hot take: LTT, JayzTwoCents, GamersNexus should all be benchmarking GPUs at a lower voltage/clock setting.

I do not care if an RTX card edges out a Radeon by 4% more FPS at 450 watts. I want to know how they perform with a silent fan and lowered voltage. Consumer Reports tests a car at highway speeds, not on a race track with nitrous oxide and a bolted-on supercharger. We should test GPUs more reasonably.

Can we please have test reports with Frames per Joule? Where is my FPJ at?

UPDATE: I can't believe I missed the stage-4 galaxy brain.

We can invert that unit to Joules/Frame instead!